Atolio’s sovereign AI-powered enterprise search platform is finally safe and secure enough for the public sector. In this webinar, hosted in partnership with Carahsoft, we broke down some of the non-negotiables for deploying Gen AI as a high ROI asset – not as a compliance or security risk.

We advised on non-negotiables that tend to get overlooked by vendors, especially when dealing with public sector organizations. These include:

- Sovereign AI for Enterprise Knowledge: How do you ask questions over your organization's knowledge and receive intelligent answers, without your data ever leaving your control?

- Access Control and Enforcement for AI and RAG: How do you protect sensitive data with document, object and system-level permissions that are enforced at query time, to ensure that AI and RAG responses only surface content that users are authorized to access.

- AI Retrieval That Drives Mission Results: How do modern AI and retrieval techniques leverage contextual signals like the collaboration graph to generate highly precise mission-ready answers through intelligent RAG workflows?

Atolio aims to help organizations build a secure AI foundation that protects their data, while giving teams instant, trusted access to the information that they need exactly when they need it.

Watch the full webinar or read on for the full conversation, including Q&A.

We've built secure AI for the public sector.

What we wanted to do is build a unifying layer across silos of knowledge that gives personalized answers to our users from fragments of information spread across disparate systems – and give personalized answers based on who they are, who they work with, and especially what they have access to read at the moment when they ask the question.

.avif)

Six years ago, when we started the company, people thought about this problem as Google for work.

“How do I search?

How do I find a document that I'm looking for that is relevant to what I want to know?

How do I find it across this sea of information, just like I have the ability when I go to google.com?”

In many ways, this is at the intersection of our experience building Splunk and PagerDuty. Splunk is obviously a highly scalable search software running on-prem in the enterprise. And at PagerDuty we helped support many of the world's largest internet services.

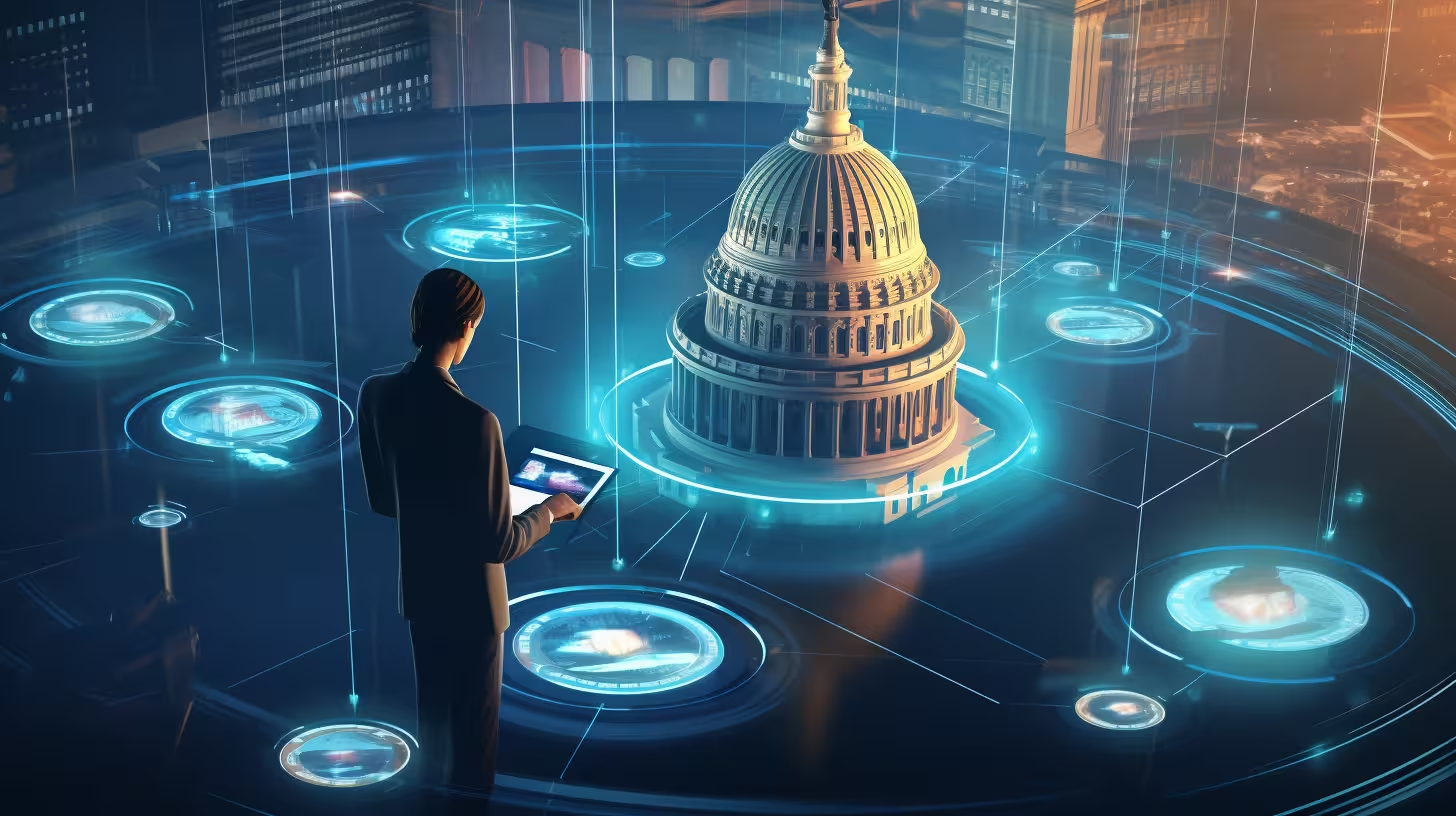

You've probably seen this quote before.

“If HP knew what HP knows, we'd be three times more productive.”

This is from Lou Platt. The funny thing is Lou retired as CEO of HP in 1999. So this illustrates how long this problem has existed.

People have called this the holy grail of enterprise problems. It's fundamentally a human problem that's endemic to all large and complex organizations. How do we take the knowledge that's written down, and the location is known only to the people who worked on it, and how do we make it findable to everybody who has access to it in a useful way, so that they can make better decisions and improve readiness?

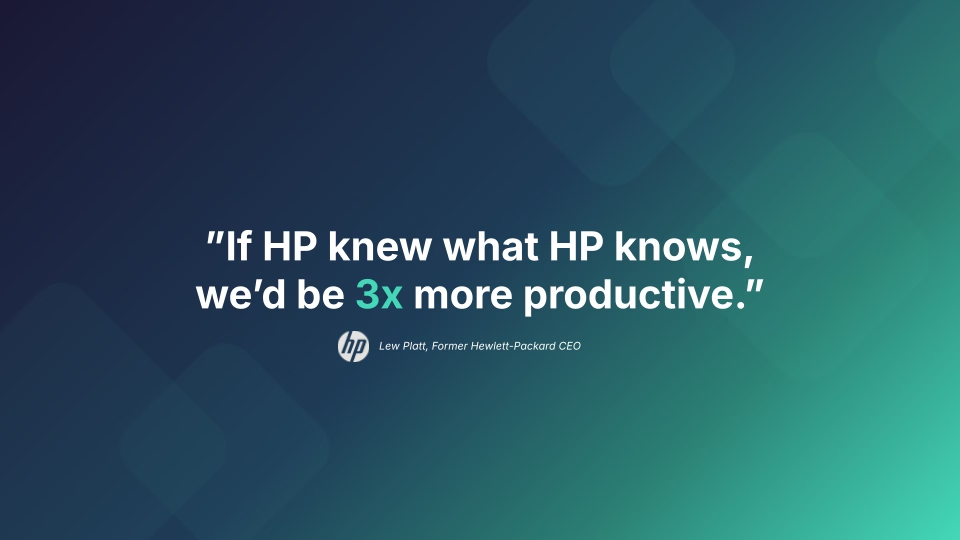

Every organization struggles with this. The cost is real.

It's not just that the knowledge is siloed, it's also fragmented. A veteran analyst might spend 20% of their day just looking for the information that they need to do their job. In the public sector, government agencies are some of the world's largest repositories of institutional knowledge. And yet, this data is trapped in these disconnected silos. Think SharePoint, Jira, Slack, legacy databases that have been built, and so on and so forth.

Everybody feels that this is an acute pain, and companies have been trying to solve this for decades now.

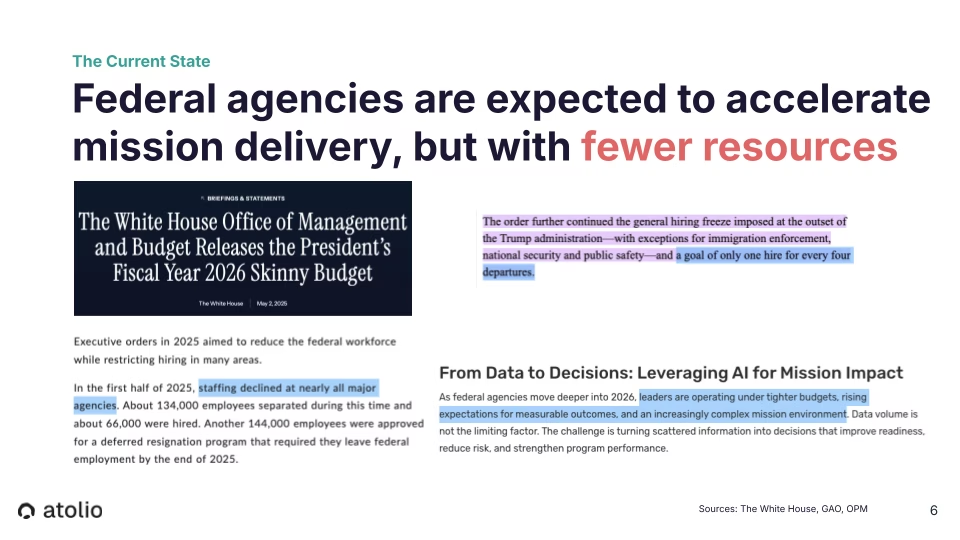

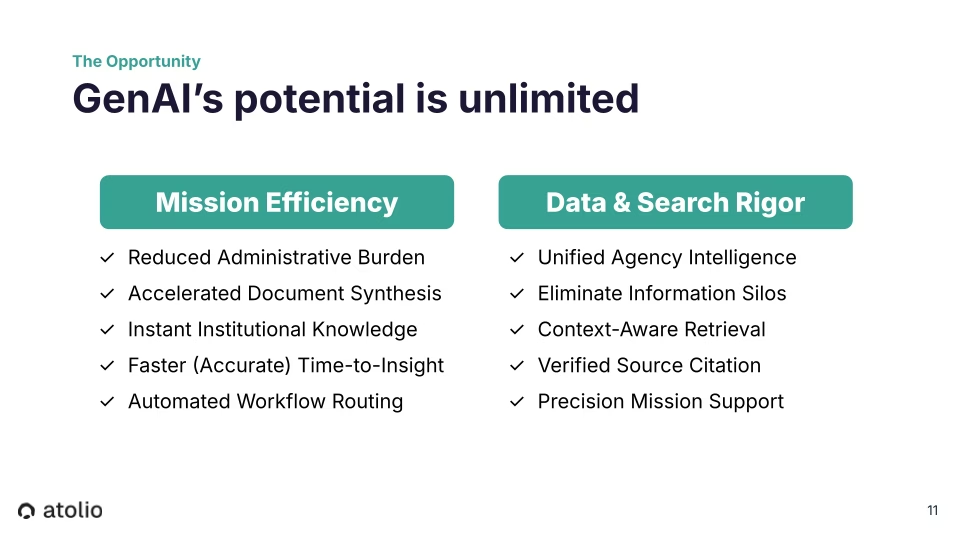

Federal agencies are being asked to do the impossible, to accelerate mission delivery with fewer resources while managing an explosion of data. The amount of data isn't the only problem though: it's this fragmentation. You have the volume of data, the data is scattered all over the place, and it needs to be gathered into one place so that you can make better decisions, improve readiness, reduce risk, and strengthen the performance of these programs.

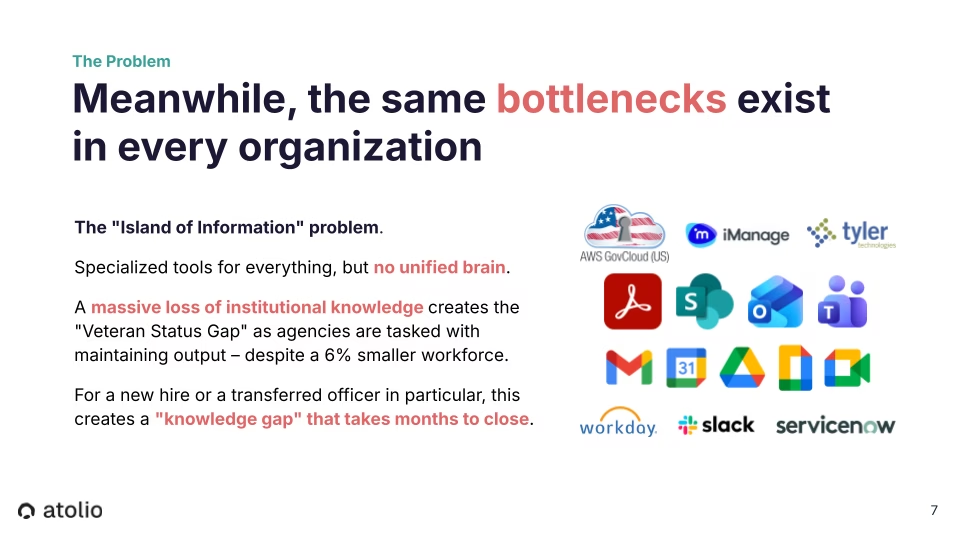

We call this the “island of information” problem, where you have these specialized tools, and these bottlenecks exist in every organization. People are working in the Microsoft ecosystem and Office365, talking on Teams, you have Google applications, you have ServiceNow and Workday and Salesforce. You have these specialized platforms for different use cases, but there's no unified brain that spans these and offers a way to make this information accessible in a useful way.

In fact, the name of our company, Atolio, comes from “atols” (I'm a scuba diver). We think of these little islands of data, and we wanted to find a way to unify these and actually unlock all of the value that's trapped in this knowledge. One example of this is as aging service members retire, agencies need to maintain the output with unfortunately a smaller force, and find a way to take the knowledge that's written down in all those places – when it's written down. But if you think of a desk drawer, the drawer that this knowledge is stored in is in the heads of the officers who are retiring. How do you make that information accessible to new officers and help close this knowledge gap that can take months?

GenAI has moved from science experiment to mission imperative. We've all heard about this. But the AI gold rush of the last few years has created massive security debt.

The AI that corporate America is using has a complete lack of security. In many cases, it's completely unworkable for public sector organizations. We talk to Fortune 500 CISOs that are still trying to keep employees from putting company information into ChatGPT. In 2026! They’re still very much dealing with this challenge.

The cost and the risk of shadow IT – personnel using public cloud models because the barrier to access secure infrastructure is too high – is unacceptable to public sector organizations. Enterprises’ missteps are out of the question. Unfortunately, corporate America's AI standards have already led to national security events.

On the plus side, AI can do amazing things today. It can solve real problems. There are real working production solutions.

One concrete example is what Atolio calls “instant veteran status.” Every team member can access the full scope of knowledge they're authorized to see wherever they're working, including on secure phones, and get a verified, secure, accurate answer in seconds from across dozens of connected systems.

Our view is that public sector AI should not require a trade-off between intelligence and security. General AI tools are risky for government because they often leak IP to the model providers – or, they're running on public clouds themselves.

There's a lot that goes into being secure and private. We've thought about all of this from the very start of our company. Before we launched the first version of our product, we talked to 762 large organizations. And the number one thing we heard is that any solution to this problem absolutely has to keep all of the data and the stack off of the public cloud entirely – meaning no data leaves your control, ever.

The complexity of being able to deploy this into your own infrastructure and leverage these models means solving several fairly deep technical challenges.

- Obviously, privacy, security, and data integrity are table stakes.

- Then you have access controls. This includes granular permissions, role-based access, understanding at the object level who can access each document or conversation, and understanding how those permissions change in real time.

- Then you have data privacy, including data residency options, and clear data usage and retention policies.

- There’s also protecting your IP, using open-source LLMs or LLMs that are hosted by the private clouds where nothing goes to the public cloud when using AI.

- Data security involves encryption at rest and in transit, as well as MFA or SSO

- And then there’s auditability, which involves logging, audit trails, and reporting for compliance and investigations.

What you need is a combination of:

- Sovereign data control

- VPC or native deployment (think GovCloud, physical hardware, major private clouds, and air-gapped environments)

- Object-level access enforcement

- Permission-aware answers

- Audit-ready AI governance

Non-Negotiables for Deploying GenAI as a High-ROI Asset

Now, we'll break down some of the non-negotiables for deploying Gen AI as a high ROI asset – and not as a compliance or security risk. We'll address many non-negotiables that tend to get overlooked by vendors. These include:

- Sovereign AI for Enterprise Knowledge: How do you ask questions over your organization's knowledge and receive intelligent answers, without your data ever leaving your control?

- Access Control and Enforcement for AI and RAG: How do you protect sensitive data with document, object and system-level permissions that are enforced at query time, to ensure that AI and RAG responses only surface content that users are authorized to access.

- AI Retrieval That Drives Mission Results: We’ll talk about how modern AI and retrieval techniques leverage contextual signals like the collaboration graph to generate highly precise mission-ready answers through intelligent RAG workflows.

All of this will help you build a secure AI foundation that protects your data, while giving your teams instant, trusted access to the information that they need exactly when they need it.

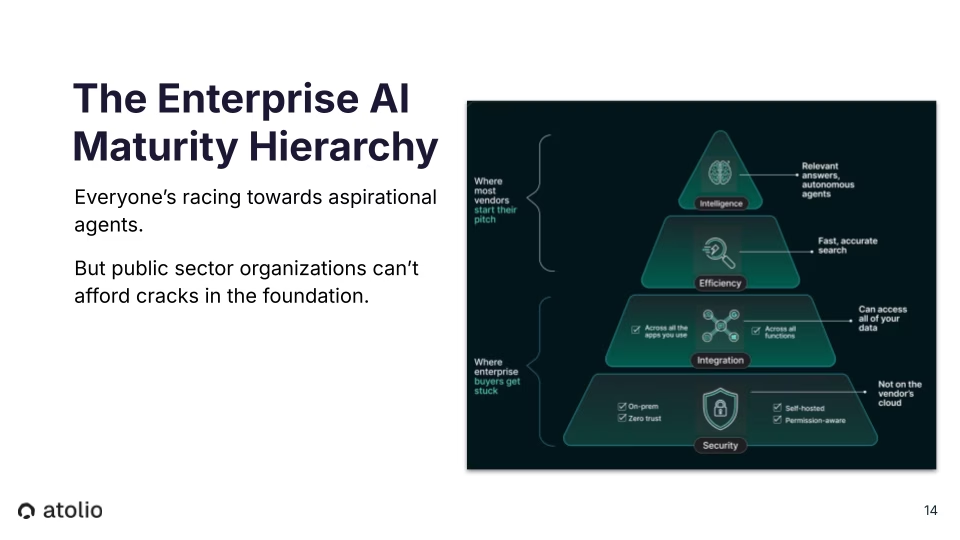

The enterprise AI maturity hierarchy reveals a pattern playing out across government and enterprise: organizations racing toward autonomous agents without securing the foundation first. The goal isn't a chatbot. What you're looking to build is a foundation for organization-wide intelligence.

But security and permissions are the base of that pyramid, and skipping them leaves the entire structure unstable. Sovereign AI enterprise search starts at the bottom: on-prem, self-hosted, fully permission-aware, on private cloud or physical hardware.

To win, to solve this, you have to work your way up and solve the base of this pyramid first. On-prem, self-hosted, fully permission-aware, on private cloud or physical hardware.

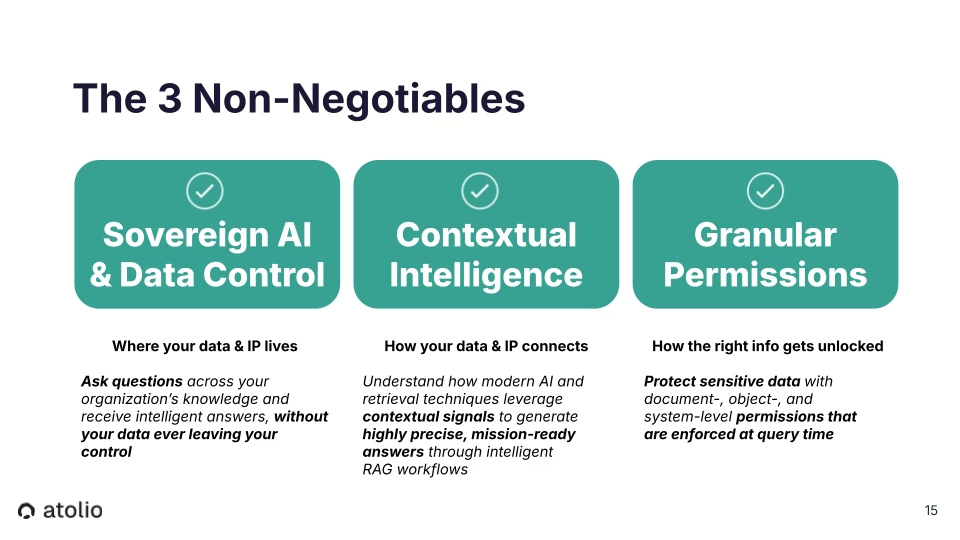

Overview: The 3 Non-Negotiables

If you're evaluating an AI vendor, first look at these criteria. If they can't check these three boxes, they're not an asset, they're a liability.

- Sovereign AI and Data Control: Can you ask questions across your organization's knowledge and receive intelligent answers without the data leaving your control?

- Contextual Intelligence: Do the modern AI and retrieval techniques leverage the contextual signals to generate the precise answers? Do they actually use the graph of how people collaborate?

Granular Permissions: Do they protect the data with document object and system level permissions, such that the user only receives an answer from what they have access to at this moment.

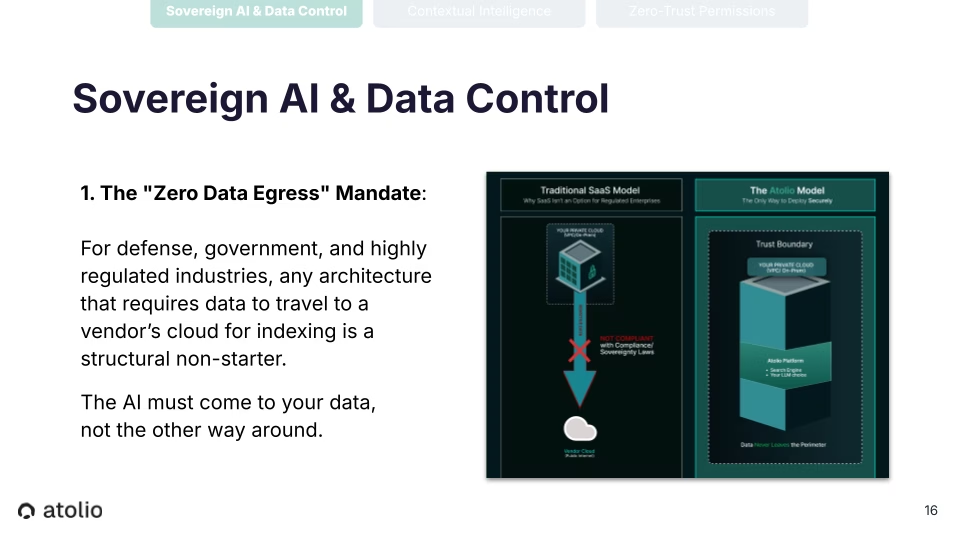

Sovereign AI & Data Control

Now for highly regulated entities like the Air Force, AI must come to where the data lives and not the other way around. Step one is zero data egress. Bring the system to the data, ask questions across the knowledge without the data leaving the control. That’s truly sovereign AI and full data control.

VPC-native deployment is essential for data residency compliance and IP protection. The entire stack – connectors, search index, and LLM orchestration – must run within the agency's own environment. True zero-trust and sovereign AI means the entire system operates fully self-contained, with zero dependency on the public internet. Nothing should leave the wire.

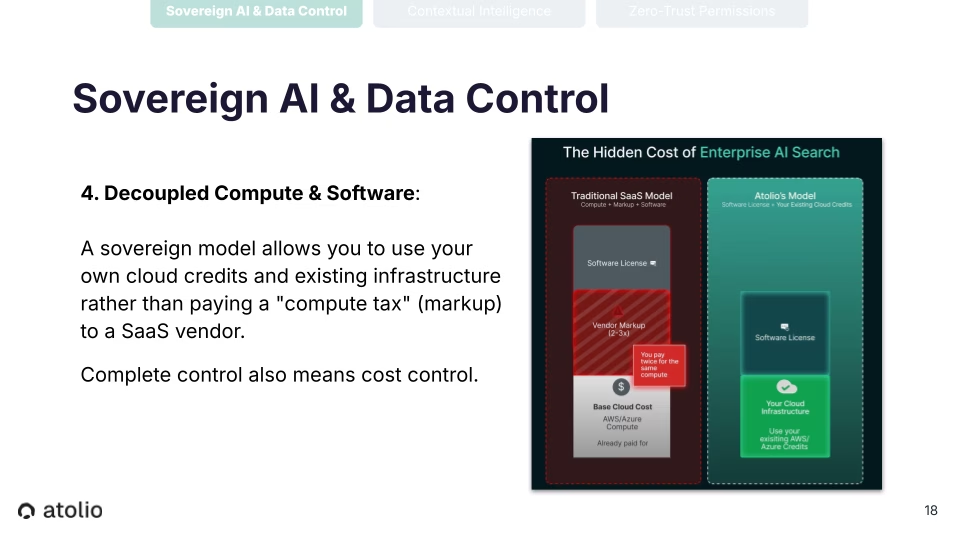

Atolio decouples compute from software, so agencies can leverage existing GovCloud or private cloud spend commitments for both the infrastructure and AI, rather than paying a vendor markup. Atolio charges for the software only; organizations use their own cloud credits for infrastructure and AI compute costs. What we do not do is we do not bundle these costs and mark up the infrastructure and AI costs. Complete data control also means complete cost control.

Contextual Intelligence

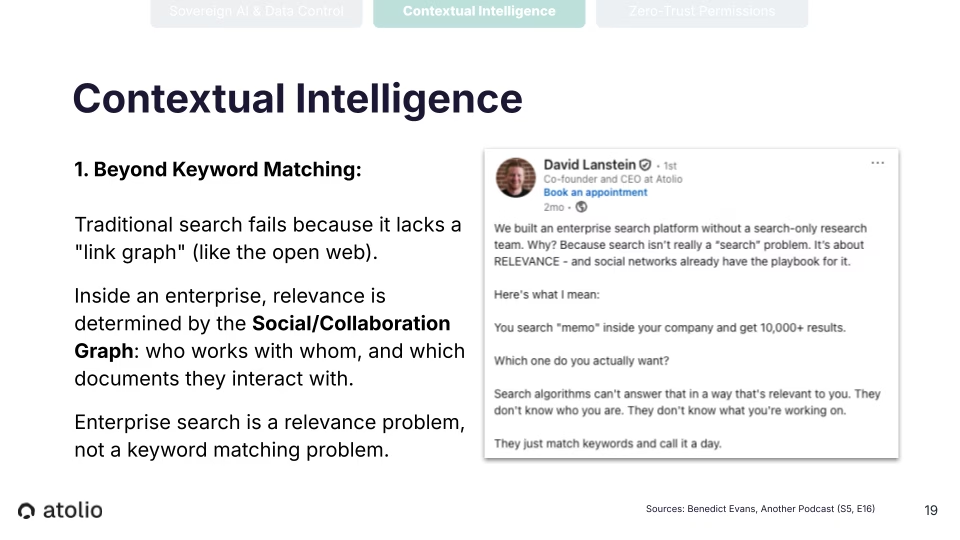

Traditional enterprise search has never solved relevance – not even Google. In large organizations, there's no equivalent of PageRank to surface the documents that actually matter to a specific person. If I search for “project notes,” I get 10,000 results that say project notes. The missing piece is knowing which ones matter to you, based on who you collaborate with and what you're currently working on. Accurate answers require understanding the collaboration graph of who somebody is, who they work with, and how those relationships change over time. You need to understand that in order to understand which project notes, in this example, or which documents actually matter to the individual, to the mission.

Answering a question requires the system to interpret natural language through four layers, metadata, semantic filters, time, and identity. It needs to understand what we call the “we” problem. When a person asks a question like, ‘Where's the spreadsheet we worked on last week?’ The system needs to be able to understand who that person considers to be “we,” who is their working team at that moment, not just who's on the org chart. And then provide a personalized answer to that person based on who they happen to be working with at that point in time, without the user having to explicitly specify ‘I want to involve these three folks.’

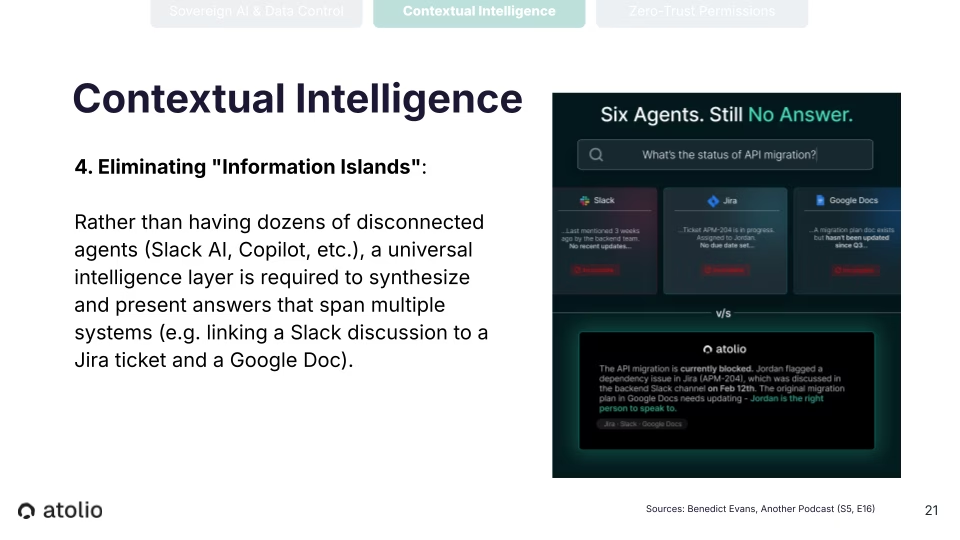

Which brings us back to these information islands: Copilot and Slack's AI bot and so on and so forth. Rather than having dozens of disconnected agents, what's necessary is a unifying intelligence layer across all these systems that can give answers from the multiple systems and really unify these vendor platforms. Many times, the problem is not that you want to find the one document that can answer the question and everything is extremely organized. Many bits of knowledge are involved in answering a question from email, a little bit is in SharePoint, a little bit might be in JIRA, a little bit is in Slack. So how do you unify these platforms? Take all of these fragments of information that all have a clue to the answer and stitch them together, and give a coherent answer from across all of those source systems in one place.

Zero-Trust Permissions

We’ve talked about permissions. We have the object level, the document level, and the system level permissions.

- It's critical for the system to mirror the source document, meaning a user can only get an answer – a piece of content can only help answer a user's question – if they can read it at the moment when they're running the query.

- These permissions have to be baked directly into the search index. Any solution needs to understand across all of the tens or hundreds of millions of documents, conversations, and emails what set of users can read this thing at all times.

And you need to have a real-time understanding of those permissions. It needs to be built directly into the search index. That's the only way that a RAG system can respond with personalized answers: if it understands it also in real time. Not just what the content is, but who can read each snippet of content at all times.

Intelligence is a liability if it does not strictly respect the “need to know” at the data layer. According to a recent IBM study, among organizations that experienced an AI-related security incident, 97% reported that they did not have proper AI-specific access controls in place. 63% of those organizations had no formal AI governance policy at all. And as just one example of the shadow AI penalty: that breaches involving shadow AI, so unsanctioned AI tools usage by employees, oftentimes public cloud systems, cost organizations an average of $670,000 more than those with governed AI environments.

The question is not whether public sector organizations will adopt AI-powered enterprise search, it’s if any of your peers already are. The question is whether you can afford to do it given the risks on a public cloud. If proprietary information and internal assets are part of your advantage, the answer is no.

Atolio: the "One Box" that turns siloes into a secure, sovereign mission advantage

Atolio is the only AI-powered enterprise search platform that delivers the productivity gains your teams need and the experiences that they expect from public AI solutions – without requiring you to hand over your sensitive assets to someone else's infrastructure. You should be able to keep your data to yourself.

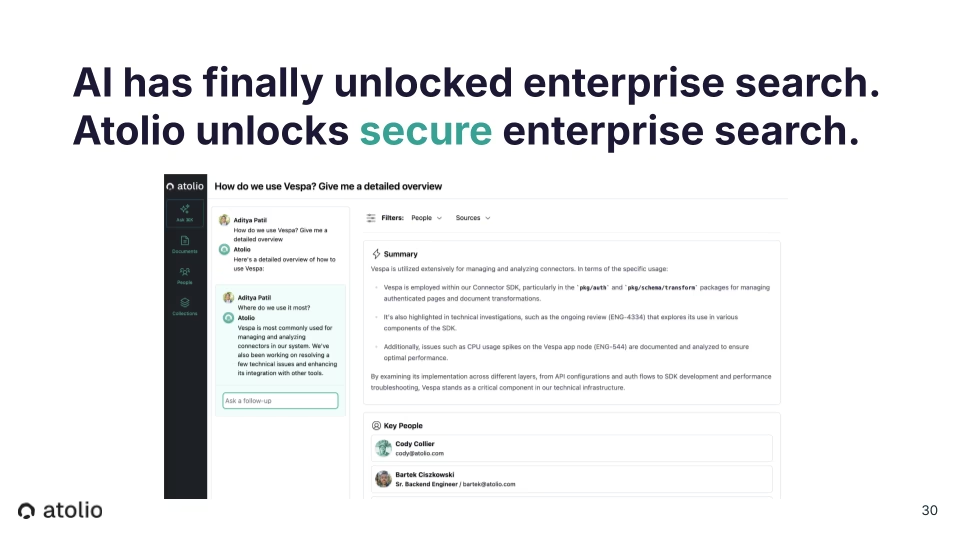

Atolio was built specifically to address the non-negotiables most vendors ignore. Our "one box" turns fragmented, siloed data into secure, sovereign mission advantage. Deployed at the US Air Force for Platform One, Atolio’s secure, self-hosted enterprise search platform solves the full stack: on-prem deployment, secure connectors across hundreds of systems, granular object-level access control, collaboration graph-driven relevance, and unified question-answering – all within a single, self-contained system.

You need to build search, and then you need to build relevance and understanding through things like the collaboration graph. And you have to put all of these components in one system to be able to unlock this question-answering expertise in a secure and useful way.

Why Atolio is preferred

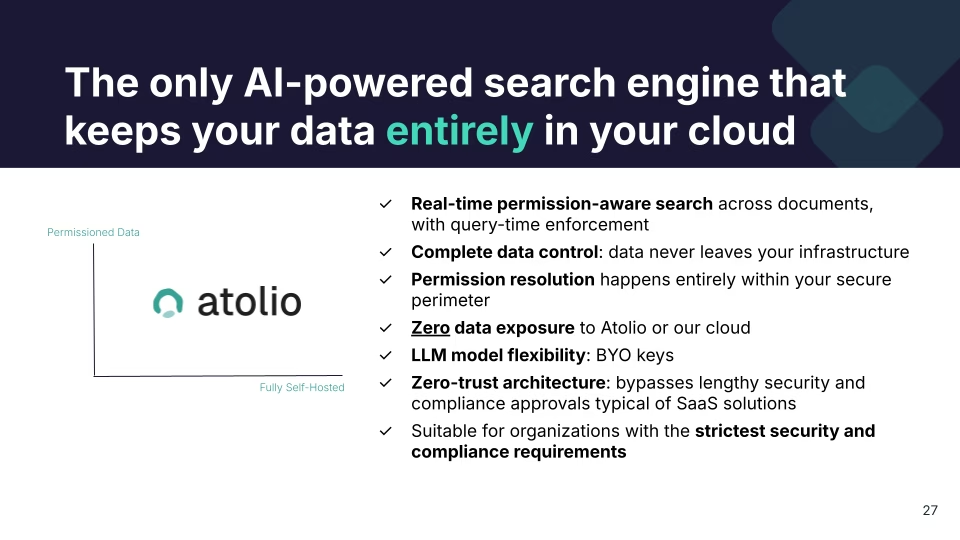

Our unique value is that we are the only product that sits in the quadrant of fully self-hosted and also understanding the access patterns of who can access which object at the document-, object-, and system-level permissions. It needs to understand who has the right to see each thing, it needs to mirror complex government hierarchy and security clearances, and navigate all that complexity.

What does this look like?

- Real-time permission-aware search across documents with enforcement at query time

- Complete data control so the data never leaves your infrastructure

- Permission resolution happening entirely within your secure perimeter

- Zero data exposure: nothing goes to our cloud, nothing goes to the public cloud, you control everything

- LLM model flexibility: you can bring your own keys and bring your own models

- Zero trust architecture, making it easy to deploy because we don't have to jump through a lot of the hoops that you would have to jump through if you were to use a traditional SaaS offering.

- Suitable and built for organizations with the strictest requirements in mind.

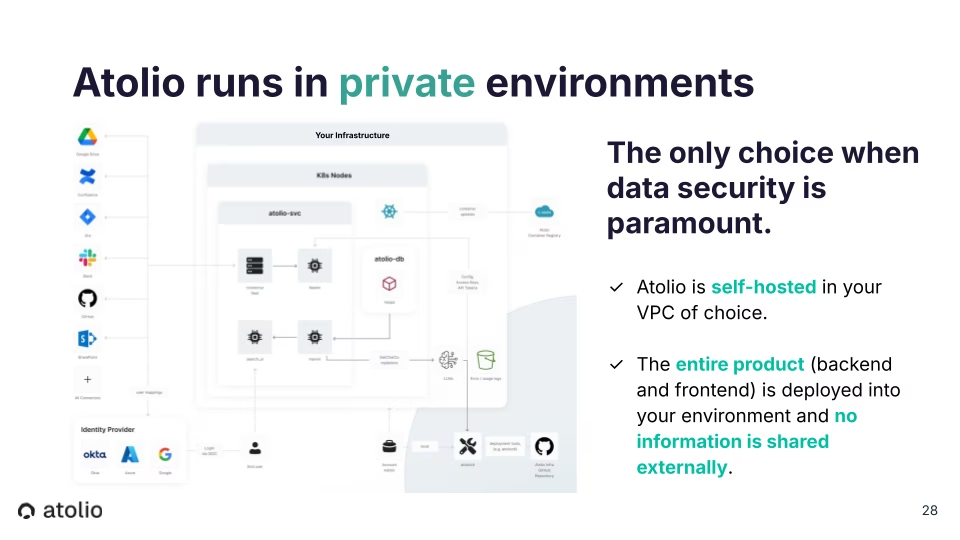

Our architecture: Atolio runs in private environments

We can go into more depth if anybody has questions, but this is our architecture. We run on your private cloud of choice, physical hardware, in air-gapped environments, and on GovCloud like we do for the US Air Force.

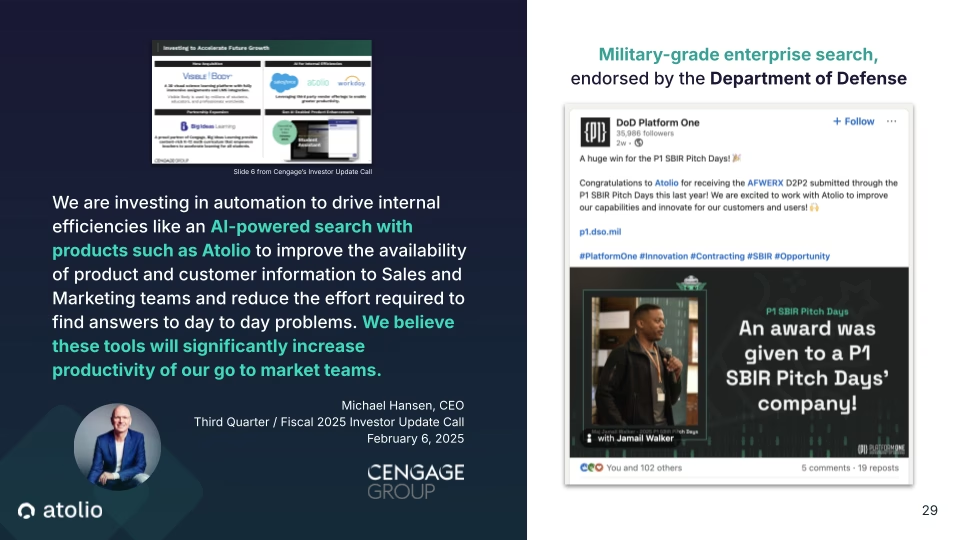

It’s worth noting that we've been called out on earnings calls as a core bet. There was a slide that Michael Hansen, CEO at Cengage, shared about their big AI bets: Salesforce, Atolio, and Workday. And then as mentioned, we won direct to phase two with the US Air Force. We’re proud to support the Platform One organization and the Department of Defense.

Demoing the platform

I’d like to show you the platform before we open it up for questions. So I'm going to ask it to “Help me prepare to lead this webinar.”

We built the application that we always wanted when building Splunk and PagerDuty. We wanted a way to surface knowledge across all of these places. And if the number one thing that we heard was ‘any solution has to be fully on-prem,’ the number two thing we heard was ‘who knows about this thing is just as important as where's the thing.’ Because there are some times when you just want to find the person who knows about the topic, to be able to reach out to them over Teams, or go sit down with them to ask questions and gain context really quickly.

Atolio pulls from across an agency's connected systems – email, calendars, internal platforms, Salesforce – and surfaces a unified, personalized briefing in seconds. The result is a consumer-grade experience with enterprise-grade security: contextually relevant, permission-aware, and entirely contained within the agency's secure perimeter. That mirrors the kind of experiences that world-class consumer products have – without exposing any of my information to the public internet.

I'd like to pause here. I'm happy to answer questions.

Q&A

Q: What is the FedRAMP authorization level for this solution? What DoD Impact Level authorizations do you have?

A: David made references a couple of times to the work we're doing with the Air Force. Through that work, Atolio will go through a number of DoD impact level authorizations. Most likely two, four, and five, possibly beyond into the classified space.

The other thing is, as David mentioned a number of times, Atolio was built from the beginning with security in mind and it's self-hostable. So we are deploying Atolio in GovCloud for clients. So deploying in a FedRAMP authorized security boundary with an accelerator is very achievable.

The technology is all the same. We believe that we could get FedRAMP low, moderate, or high relatively quickly, probably within three or four months, which would be much faster than other vendors who created a product and bolted on security later.

Q: Does the system automatically sync with existing AD or LDAP permissions, or do we have to rebuild and replicate all of those permissions manually within Atolio?

A: That's a good question. The whole premise behind the product is you don't have to manage anything around permissions. The source of truth is always the data that is pulled from the data sources that we're indexing from. So the system connects directly with Active Directory, or Okta, or whatever IDP your organization is using. We pull the directory from there, and we use that then to map back to the users in each individual source. And then those sources define the permissions for who can see what in the underlying index. The advantage of that is there's nothing else to configure. If you have access to it, then you can see it in the index. And if you don't have access to it, then you do not. If you subsequently change a permission on a document in the underlying source, it's reflected in the index in Atolio as it streams in. Very straightforward. But the source of truth is always Active Directory and the underlying source.

Q: If a user’s access to a document is revoked in the source system or permissions change, how quickly does that change reflect in Atolio’s search results?

A: That depends on the source and how it streams data back to us. Most sources these days have a streaming model, so we can get data back within seconds generally. It may be minutes for some other sources, but we're only constrained by how quickly the upstream system can send the updates back to the product. But for many systems, that's seconds. And that's seconds regardless of whether the content has changed or whether the permissions have changed.

Q: Which LLMs do you support? Are there LLMs you don’t support?

A: Everything's changing every day and we're picking a handful of best of breed to support, but do you want to talk about that?

It's very much client driven in terms of which models are most useful to a particular client and which ones are most performant at this moment in time. One of the great advantages of the product is it's pretty model agnostic. So instead of having to pick a product by a model provider and be completely locked into that model, even if it's surpassed in performance by another model subsequently,you could pick model A today and switch to model B tomorrow if that turns out to be better. At the moment, we have four or five models that are first class that we support right now. We really optimize for each one, but it is a very fast moving space, so it could be Anthropic’s models, OpenAI’s models, Gemini, and open models, or also support if you are running your own compute locally.

A key point to emphasize here is that we are not training the model on this data. It's not like we're taking a model, feeding all of the content in, and trying to have the AI enforce permissions. That, at least for the foreseeable future, is not a viable way to provide security. The only secure way to provide the service is to have a search and relevance subsystem understand: of the universe of 10 billion documents, conversations, emails that you may have at your organization, what are the 500,000 this particular person can access at this moment? And of those 500,000, what are the 10 to 15 fragments of information that we think are most likely to be able to answer their question? And so the LLM only gets those 15 fed into the context window. And again, this can be an open source model – however, you're comfortable in terms of a security model, including fully air-gapped – but there's no model where we're training all of this data and having the model do the guardrails. That's not the current reality.

We're touching the sovereign aspect here. The entire product lives inside a Kubernetes cluster, and that cluster could be running in AWS, Azure, or GCP. But it could also be a cluster running on your own hardware, for instance. When we say sovereign, we mean your security perimeter. So we as a company have no access to that cluster, nor do we need it. The operation is self-sufficient. Then for the large language model, the inference model, if you're running an AWS, you'd connect out to the Bedrock deployment of that model hosted by AWS, again remaining inside your security perimeter. But for an air-gapped deployment, if you have sufficient hardware on-site, enough GPU on site, then you can host your own model and be completely off-cloud altogether as well.

I was just meeting with the Head of Collaboration of a highly siloed and security-conscious technology company yesterday. For them “sovereign” means we have a building, only certain people can go in this building, and we develop certain products in this particular building. So for them, they would create a deployment of Atolio just for the folks in that building that only have access to those systems, and everything is entirely firewalled – even from the rest of the company.

Q: Is our data used to train or fine-tune the underlying model, or is it only used for RAG (Retrieval-Augmented Generation)? If the former, does anything (data, metadata, models, etc.) get sent back to Atolio?

A: To the fine tuning point here, nothing comes back to Atolio. Nothing leaves. At the point David was putting the example question here into the product, that user interface is served up by a machine inside the cluster, inside your environment. It's deployed to another service inside that environment, the data store, the index, etcetera is also inside that cluster too. The inference layer reaches out to Bedrock running inside the cloud account that you manage yourself – it doesn't come out to us. There's no fine tuning, there's no other data leaving. It really is completely private.

Other providers have had the idea that they would create this cross customer model. Part of the way that Google.com sold search is by having a lot of data, all the search queries that everybody's asking, and all of the content on the internet. One of our competitors’ founders is from Google, and had this idea of creating a cross-customer model, basically combining everyone's data, running the service as a public cloud offering, and solving relevance that way. That is obviously completely unacceptable to anybody who actually cares about security. So we built this. It's fully within your control. Nothing goes back to us. You control the networking, you can audit that nothing goes to the public internet – it's completely audible as well.

Q: If we need custom connectors, are they included in the licensing and how long does it take?

A: As relates to custom connectors, the short answer is it depends. There are connectors that we will build, we'll end up having a few hundred that we build and support ourselves. If there is a system that, for instance, is homegrown (like a homegrown ticketing system that we haven't seen before), we may charge for that. But we're also a heavily partner focused organization. So we have a network of partners including Carahsoft and some of those partners very much like that custom development work. They may have built the application in the first place and know how it works. And we will provide an SDK to make it as painless as possible to build this, understand the permissions, and stream the data into your Atolio deployment.

Q: Which specific federal systems (SharePoint, Google Drive, legacy databases, etc.) does Atolio support out-of-the-box?

A: We support all of the major providers, so all Microsoft systems, Google, Atlassian, Salesforce, ServiceNow, and so on. And we're building more connectors all the time.

Q: If we're hosting, what is the recommended infrastructure (compute, storage, database size, etc.)?

A: That's a little bit of a “how long is a piece of string” kind of question. It really depends on the size of deployment, the amount of data, etc. The underlying data index is a replicated multinode system so the typical deployment starts at six or eight nodes for the whole cluster and moves up from there. But we'd really need to talk about how much data, how many people concurrently use it as to how large that cluster needs to grow.

Gareth and I were early engineers at Splunk, and we saw a pretty similar issue with Splunk: it depends how much data is being generated, how many people are running concurrent queries, and so on and so forth. So we have some basic metrics in terms of how many people, how much data, and how much usage, we can pretty quickly get to a recommended architecture. And again, from a security perspective, it's everything all the way down to air-gapped, so GovCloud, major private cloud, or air-gapped, or physical hardware – whatever it is that you're comfortable with.

Conclusion

The challenge of unlocking institutional knowledge trapped across disconnected systems isn't new, but sovereign AI enterprise search has finally made it solvable without forcing a trade-off between intelligence and security.

For federal agencies and public sector organizations evaluating AI vendors, three non-negotiables determine whether a solution is a mission asset or a compliance liability.

- First, Sovereign AI and Data Control: the AI must come to your data, not the other way around. No data egress, no public cloud exposure, ever.

- Second, Contextual Intelligence: answers must be personalized based on who is asking, who they work with, and what they are authorized to see at that moment.

- Third, Zero-Trust Permissions: access controls must be enforced at query time, mirroring document-, object-, and system-level permissions from the source – not applied as a UI-level filter after the fact.

Atolio is the only AI-powered enterprise search platform that satisfies all three requirements. It deploys fully self-hosted within your existing infrastructure – GovCloud, air-gapped environments, or physical hardware – with zero data exposure to Atolio or any public cloud. It is the only solution in its category that sits at the intersection of full self-hosting and granular, real-time permissioned access across every connected system.

Federal agencies that build on a secure AI foundation today will be better positioned to accelerate mission delivery, close workforce knowledge gaps as experienced personnel transition out, and turn fragmented institutional data into a decisive operational advantage.

Book a call with our team to discuss your agency's deployment requirements, or watch a demo of Atolio to see our sovereign AI enterprise search in action.