Why Regulated Enterprises Can't Use Most AI Search Tools, And What Actually Works

There's a massive, largely invisible problem in enterprise AI adoption: an enormous segment of the global economy wants AI-powered enterprise search, but legally cannot buy most of what the market offers.

Banks. Governments. Defense contractors. Healthcare systems. Pharmaceutical companies. Together, they represent hundreds of billions in addressable spend – and most of them are locked out by a single architectural flaw baked into the way Silicon Valley builds software.

That flaw is SaaS-by-default. And for regulated industries, it's not just inconvenient. It's often illegal.

The Enterprise Search Market Is Booming. Most of It Is Inaccessible to Regulated Buyers.

According to Mordor Intelligence’s latest analysis, the global enterprise search market is valued at $7.47 billion in 2026 and is projected to reach $11.66 billion by 2031, growing at a 9.31% CAGR. Demand is being driven by the rise of retrieval-augmented generation (RAG), conversational AI interfaces, and the explosive growth of unstructured enterprise data – emails, contracts, patents, research files, communications – that keyword search was never built to handle.
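The three figures quoted above can be checked against each other with the standard compound-growth formula. The sketch below is only a sanity check on the arithmetic, not part of the cited analysis. The five-year horizon (2026 to 2031) and the function names are assumptions introduced here.

```python
def project(start_value: float, cagr: float, years: int) -> float:
    """Compound start_value forward at a constant annual growth rate."""
    return start_value * (1 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures as quoted in the text, in USD billions.
START_2026 = 7.47
END_2031 = 11.66
YEARS = 5  # assumption: 2026 -> 2031 treated as five compounding periods

projected_2031 = project(START_2026, 0.0931, YEARS)
rate = implied_cagr(START_2026, END_2031, YEARS)

print(f"Projected 2031 value: ${projected_2031:.2f}B")
print(f"Implied CAGR: {rate:.2%}")
```

Under that five-year assumption, the projected value and the implied rate both agree with the quoted figures to two decimal places.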

Now that it is more widely available than ever, AI-powered enterprise search is no longer a nice-to-have; it's a core productivity tool. The question isn't whether enterprises need it, but whether they can legally deploy it.

For industries like BFSI (banking, financial services, and insurance) – which alone commands nearly 25% of enterprise search market share – and healthcare, which is the fastest-growing vertical, the answer under most vendor architectures is: no.

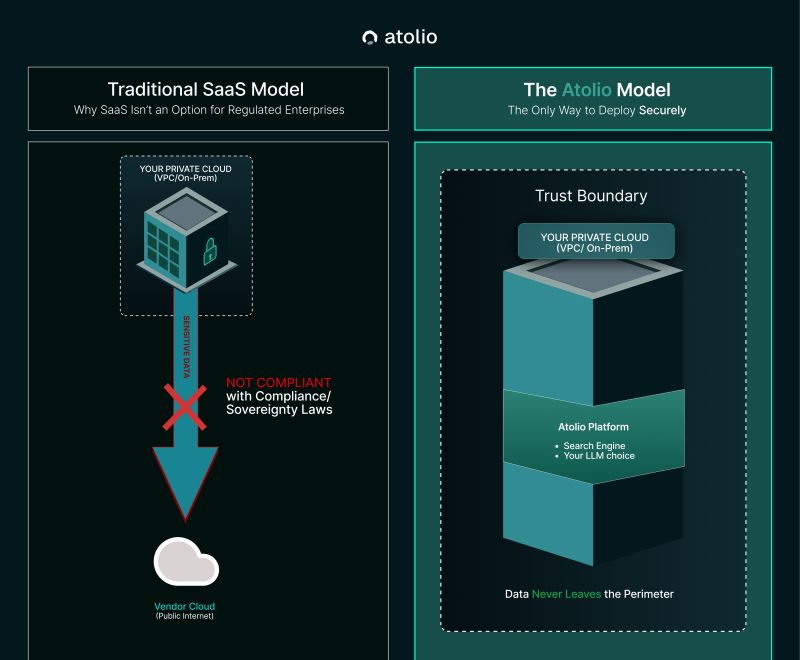

Why SaaS AI Search Doesn't Work for Regulated Industries

Before writing a line of code, Atolio spoke with 762 enterprises across regulated industries. The finding was consistent: any AI solution that touches sensitive internal data – CEO communications, unfiled patents, product roadmaps, client PII, clinical records – has to stay entirely within the client's environment.

Standard SaaS AI search architecture does the opposite. Data leaves your environment, travels to the vendor's cloud for indexing and processing, and often lives there. For a growing share of global enterprises, that model creates three categories of hard blockers:

1. Data Residency Laws

Countries including France, Germany, and Australia have enacted strict data sovereignty legislation. In some cases, it is not enough for data to stay within national borders: it must also remain on specific certified infrastructure. No SaaS pipeline, regardless of vendor reputation, satisfies these requirements.

This is why sovereign-cloud mandates are now recognized as a structural driver of on-prem and hybrid enterprise search architecture, particularly across Europe, Asia Pacific, and the Middle East.

2. Industry Regulation

In financial services, defense, and healthcare, sensitive data cannot leave the VPC – full stop. A top-tier bank cannot pipe client PII into a third-party SaaS API. A defense contractor operating on classified networks cannot route queries through a public cloud endpoint. An airgapped AI deployment isn't a preference for these organizations; it's a legal and operational requirement.

Data security and privacy concerns are now identified as the single largest restraint on enterprise search market growth globally, estimated to suppress CAGR by approximately 1.7 percentage points, a drag that falls almost entirely on buyers who want to adopt but can't under conventional architectures.

3. IP Protection

For pharmaceutical companies, entertainment studios, and technology firms, proprietary content isn't just valuable; it is the business. Exposing trade secrets, unpublished research, or competitive strategy to an LLM provider or third-party cloud storage infrastructure represents an unacceptable risk. The concern isn't hypothetical: any SaaS intermediary with access to your content corpus has structural access to your competitive advantage.

The Only Architecture That Actually Works: Code Goes to the Data, Not the Other Way Around

Standard AI search vendors ask you to send your data to their cloud. Atolio inverts the model entirely.

From connectors and search index to LLM orchestration and the enterprise RAG pipeline, Atolio deploys its full stack inside your environment. Whether that's a VPC on AWS, Azure, or GCP, or an on-premises data center, the entire platform runs within your trust boundary. Data never crosses a border, never leaves your perimeter, and never touches Atolio's servers.

This is what secure internal RAG actually means in practice. Not a privacy policy. Not a data processing agreement. A deployment architecture where the question of data leaving your environment simply doesn't arise, because it architecturally cannot.
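One way to picture a trust boundary enforced by configuration rather than by policy is a startup check that refuses any component endpoint outside the customer's own network ranges. The sketch below is purely illustrative and is not Atolio's implementation. The network ranges, the endpoint names, and the functions `is_inside_perimeter` and `find_external_endpoints` are hypothetical.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical perimeter: private ranges owned by the customer's VPC or data center.
TRUSTED_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_inside_perimeter(endpoint_url: str) -> bool:
    """Return True only if the endpoint is a literal IP inside a trusted network."""
    host = urlparse(endpoint_url).hostname
    if host is None:
        return False
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # Hostnames would need resolving through internal DNS; this sketch
        # takes the conservative route and treats them as outside the perimeter.
        return False
    return any(address in network for network in TRUSTED_NETWORKS)


def find_external_endpoints(endpoints: dict) -> list:
    """List the names of configured components that point outside the perimeter."""
    return [name for name, url in endpoints.items() if not is_inside_perimeter(url)]


# Hypothetical component configuration for a self-hosted deployment.
config = {
    "llm": "http://10.1.2.3:8000/v1",
    "search_index": "http://192.168.4.20:9200",
    "telemetry": "https://203.0.113.5/collect",
}

violations = find_external_endpoints(config)
print(violations)
```

In this toy configuration only the `telemetry` entry is flagged, because its address falls outside both trusted ranges. A real deployment would typically enforce the same rule at the network layer as well, for example with egress firewall rules, rather than relying on an application-level check alone.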

The result is an enterprise knowledge management platform that delivers the full capability set of modern AI-powered enterprise search – semantic retrieval, context-aware answers, permission-aware access controls, conversational interfaces – without any of the compliance exposure that comes with SaaS.

What This Unlocks: AI for the Industries That Need It Most

This architecture is why Atolio is able to work with organizations like the U.S. Air Force, and why highly regulated industries that had written off enterprise AI as legally out of reach are now deploying it.

Consider what's now possible:

- Financial services can deploy an enterprise LLM that surfaces Basel III audit materials, client communications, and risk documentation without violating data residency or PII handling requirements

- Defense and government can run self-hosted, airgapped AI on classified or sensitive networks with no external data egress

- Healthcare can give clinicians context-aware, permission-aware access to patient records, research, and clinical guidelines, all within the compliance perimeter

- Pharma and IP-intensive businesses can index their most sensitive content for enterprise knowledge discovery without exposing it to third-party infrastructure

This isn't a niche use case. It's a foundational requirement for a significant share of global enterprise spend, and it's one that the SaaS-default market has systematically failed to serve.

The Broader Shift: Enterprise RAG Requires Deployment Flexibility

The rise of retrieval-augmented generation is reshaping the enterprise search market. RAG systems (which ground large language model outputs in verified enterprise content rather than generating answers from parametric memory alone) are now the architecture of choice for enterprise knowledge management. They collapse the boundary between search, chatbot, and document intelligence into a single context-aware layer.

But RAG is only as trustworthy as the deployment model it runs on. A RAG pipeline that indexes your most sensitive content and routes queries through a third-party cloud is not a secure internal RAG system; it's a SaaS product with a different name.

True enterprise RAG for regulated industries requires:

- Full on-prem or self-hosted deployment within the customer's VPC

- Permission-aware retrieval that respects existing access controls at query time

- LLM flexibility: the ability to bring your own LLM or run open-weight models within your environment

- No external data egress under any circumstances
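To make the permission-aware requirement in the list above concrete, here is a minimal sketch of retrieval that applies access controls at query time, before ranking and before anything reaches the LLM prompt. It is a toy illustration under stated assumptions, not Atolio's implementation: the `Document` type, the group-based permission model, the keyword-overlap scoring, and the function names are all hypothetical stand-ins for a real index and a real identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


def score(query: str, doc: Document) -> int:
    """Toy relevance score: number of query words that appear in the document."""
    return len(set(query.lower().split()) & set(doc.text.lower().split()))


def retrieve(query: str, user_groups: set, corpus: list, k: int = 3) -> list:
    """Drop documents the user cannot read, then rank what remains."""
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    ranked = sorted(visible, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked if score(query, d) > 0][:k]


def build_prompt(query: str, docs: list) -> str:
    """Ground the model in retrieved content only (the RAG step)."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


corpus = [
    Document("roadmap", "product roadmap for search release", frozenset({"engineering"})),
    Document("patent", "unfiled patent draft for search ranking", frozenset({"legal"})),
    Document("handbook", "employee handbook and holiday policy", frozenset({"engineering", "legal"})),
]

engineer_hits = retrieve("search roadmap", {"engineering"}, corpus)
lawyer_hits = retrieve("search roadmap", {"legal"}, corpus)
print([d.doc_id for d in engineer_hits])
print([d.doc_id for d in lawyer_hits])
print(build_prompt("search roadmap", engineer_hits))
```

The design point is the ordering: because the permission filter runs before ranking, a document the user cannot read never becomes a candidate, so it cannot leak into the prompt or the answer. The same query returns different results for the two users in this example.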

This is the architecture Atolio was built around from day one: not as a compliance checkbox, but as the only viable path to deploy enterprise search at all for a large and underserved segment of the market.

The Market Opportunity Nobody Is Serving (Until Now)

The enterprise search market is projected to grow at roughly 9.3% annually through 2031. The fastest-growing drivers include conversational AI search, enterprise RAG adoption, and, critically, sovereign-cloud mandates that are reshaping deployment architecture globally.

These tailwinds do not benefit vendors locked into a SaaS model. They benefit vendors who can meet enterprises where their data actually lives.

Banking, financial services, and insurance. Healthcare and life sciences. Government and defense. These are the verticals where AI-powered enterprise search delivers the most transformative productivity and knowledge management gains – and they're the same verticals that have been systematically excluded by conventional deployment architecture.

Atolio was built to close that gap.

Deploy Enterprise Search Where Your Data Actually Lives

If your organization operates under data residency requirements, handles regulated data, or simply cannot afford the IP and compliance risk of SaaS AI, Atolio's self-hosted, on-prem enterprise search platform is built for you.

Full-stack deployment inside your VPC. Your LLM choice. Permission-aware, context-aware enterprise knowledge management. Data that never leaves your perimeter.

Learn more here.