Most enterprises didn't set out to have a fragmented AI strategy. They made a series of individually reasonable decisions – upgrading Slack, adding Copilot, enabling Gemini in Gmail, turning on the Jira assistant – and ended up with six AI agents or in-app search solutions that, collectively, cannot answer a single question that spans more than one system.

The result is two compounding problems that most AI search vendors would rather you not think about too hard: a workflow problem that makes knowledge fragmentation worse, and a pricing model that charges you for compute you've already bought.

The Knowledge Fragmentation Problem Gets Worse With Per-Tool AI

There's a common assumption in enterprise AI procurement that more AI coverage equals better knowledge management. Deploy AI in every tool, and the knowledge problem solves itself.

It doesn't. In fact, it makes the core problem worse.

Here's what's actually happening at enterprises that have deployed five or six AI-powered tools: they've gone from digging through six systems to asking six agents. The interface changed, but the fragmentation didn't.

The enterprise search market is shifting sharply toward conversational and natural-language-processing search, which is projected to grow at an 11.43% CAGR through 2031 – faster than any other modality. The demand signal is real. But a conversational interface is not the same as organizational intelligence, and the per-tool AI model delivers the former while actively undermining the latter.

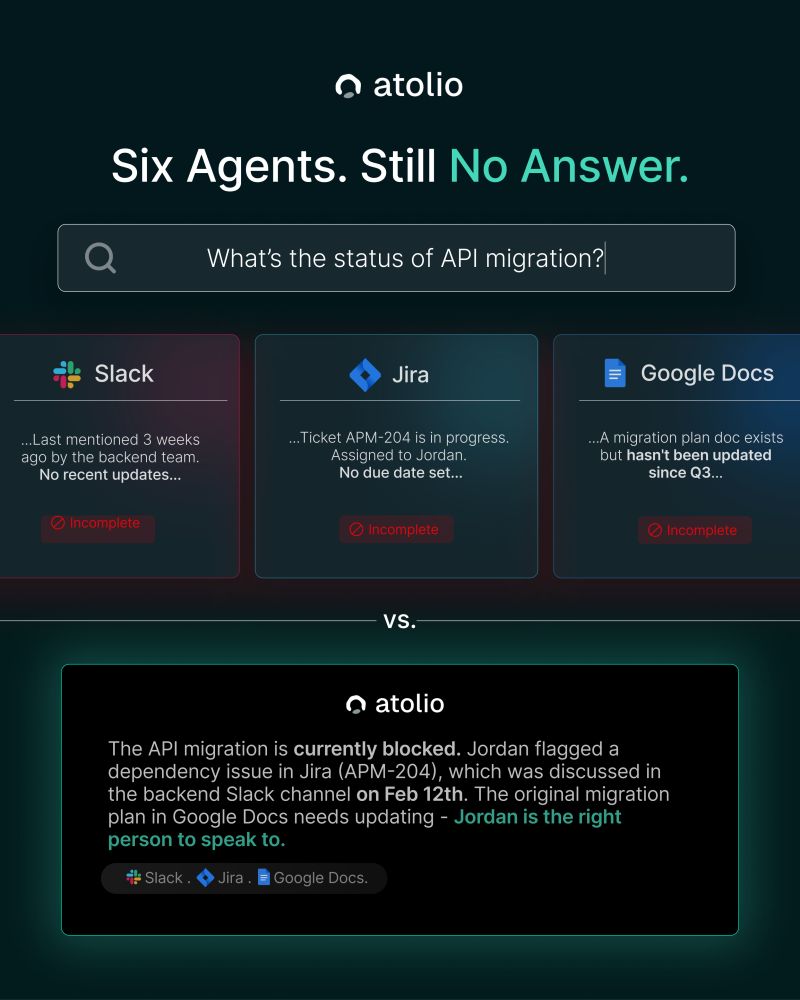

Six Agents, Still No Answer

Each AI agent (think Salesforce’s Slackbot, Microsoft’s Copilot, Gemini in Google Workspace, Jira's assistant, or ServiceNow's virtual agent) is, whether by nature or nurture, an island. It understands its own system, because that’s the system it was built around and connected to. It has no concept, or at best a very limited one, of what exists in the other five.

Ask Slack's agent about a Jira ticket that's connected to a conversation in a Google Doc, and it cannot help. Not because the agent is poorly built, but because the answer literally spans three systems, and no single-tool agent is architected to see all three. Ask each agent separately and every answer comes back incomplete: the topic was last mentioned in Slack three weeks ago, the ticket is in progress with no due date, and the doc hasn't been updated since Q3. Each answer is technically accurate; taken together, they're useless.

What makes this worse is a behavioral consequence that's easy to miss: because each agent feels authoritative within its own system, people stop cross-referencing. The answer feels complete because the interface is fluent and confident. It isn't complete; it's one island's answer. The trust the UX creates is precisely what makes the knowledge gap harder to detect.

What Enterprise Knowledge Management Actually Requires

True enterprise knowledge management isn't about having AI in every tool. It's about having an AI layer with universal scope: one that knows who you are, what you do – and don’t – have access to, and how information connects across the entire organization.

This is what enterprise RAG, properly implemented, actually delivers. Not a chatbot layered on a single repository, but a context-aware, permission-aware retrieval system that indexes across all systems simultaneously and synthesizes answers from wherever the signal actually lives (Slack, Jira, Google Docs, Confluence, email, SharePoint) in a single response with citations.

Retrieval-augmented generation is now recognized as one of the primary drivers of enterprise search market growth, precisely because it collapses separate question-answering systems into a single layer that sits atop existing repositories, rather than multiplying the number of systems people need to query.

The difference isn't subtle. "The API migration is currently blocked: Jordan flagged a dependency issue in Jira (APM-204), it was discussed in the backend Slack channel on Feb 12th, and the migration plan in Google Docs needs updating" is a different class of answer than three incomplete fragments from three separate agents. It's an answer that actually reflects how enterprise knowledge works: distributed, connected, and requiring synthesis.

The Compute Tax: Why You're Paying $400,000 for Infrastructure You Already Own

The second problem is less visible but equally significant, and at enterprise scale, it compounds fast.

A 700-person company recently received a quote of $400,000 per year for AI-powered enterprise search. That's roughly $571 per seat, per year, for software whose compute needs could be met by cloud capacity the company has already purchased.

This isn't an outlier. It's how SaaS AI pricing works, and understanding the mechanics explains why renewals are tripling for companies that signed enterprise AI deals just two years ago.

The Hidden Structure of SaaS AI Pricing

Most enterprises think of their AI software spend as paying for the software: the IP, the model, the product. That's not actually what's happening. SaaS AI vendors buy compute wholesale from AWS or Azure, mark it up 2-3x, and bundle it into per-seat pricing. The more your organization actually uses the product, the more you're paying for infrastructure the vendor doesn't own.

Cloud deployment currently commands 67.46% of enterprise search market share, and the vendors building on that cloud infrastructure are capturing the margin between what they pay for compute and what they charge you for it. You're not just paying for software. You're paying for software plus the vendor's AWS bill plus their margin on top of that.

The cost structure becomes especially visible when you map it against what most large enterprises have already committed. Organizations at scale typically carry cloud spend commitments: EDPs, VPC infrastructure, pre-allocated credits across AWS, Azure, or GCP. That compute is already paid for. Per-seat SaaS AI creates a parallel compute spend for the exact same infrastructure, because the vendor is running your workloads on their cloud, not yours.
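The "paying twice" argument reduces to simple arithmetic. The sketch below is a hypothetical cost model, not Atolio's or any vendor's pricing. It assumes the license portion is the same in both models, that a SaaS vendor resells compute at a flat markup (the 2-3x range cited above), and that a self-hosted deployment can draw down committed cloud credits that would otherwise go unused. Every input other than the 700-seat, $400,000 quote is a placeholder to replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """Hypothetical annual figures in USD; placeholders, not vendor quotes."""
    seats: int
    license_per_seat: float          # software/IP portion, assumed equal in both models
    compute_cost: float              # wholesale cloud cost of running the workload
    markup: float                    # vendor markup on resold compute (article cites 2-3x)
    unused_committed_credits: float  # prepaid EDP/credit capacity otherwise left idle


def bundled_cost(s: Scenario) -> float:
    """Per-seat SaaS: license plus compute resold at a markup on the vendor's
    cloud. Existing commitments are untouched, so this is new spend."""
    return s.seats * s.license_per_seat + s.compute_cost * s.markup


def unbundled_cost(s: Scenario) -> float:
    """Self-hosted in your VPC: license plus only the compute that existing
    commitments do not already cover."""
    new_compute = max(0.0, s.compute_cost - s.unused_committed_credits)
    return s.seats * s.license_per_seat + new_compute


def per_seat(total: float, seats: int) -> float:
    return total / seats


# The quote from the article: $400,000/year for 700 seats.
print(round(per_seat(400_000, 700), 2))  # 571.43

# Illustrative inputs only.
example = Scenario(seats=700, license_per_seat=200.0, compute_cost=100_000.0,
                   markup=2.5, unused_committed_credits=150_000.0)
print(bundled_cost(example), unbundled_cost(example))  # 390000.0 140000.0
```

With these placeholder inputs, the entire gap comes from two terms: the markup on resold compute, and the compute that existing commitments absorb. If your commitments are already fully consumed, the unbundled figure rises to license plus raw compute, but the markup still drops out.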

The Unbundled Model: Software License + Your Existing Cloud Credits

The alternative is structurally simple: pay for the software, run it on your own infrastructure.

Atolio's model separates the software license – the actual IP, the search engine, the LLM orchestration, the enterprise RAG pipeline – from the compute. Enterprises deploy the full stack in their own VPC, using their own cloud credits. The AI capability is additive to existing cloud spend, not a new line item layered on top of it.

This isn't just a pricing preference. It's a fundamentally different business model. Most vendors don't unbundle because the compute margin is the business: the software is the vehicle for capturing infrastructure spend at scale. Atolio's business is the software. The infrastructure is yours to control.

At 700 seats, that's the difference between a $400,000/year invoice and a software license applied against infrastructure you've already allocated. At enterprise scale, and across multi-year contracts with usage-based growth built in, that gap widens significantly.

Two Problems, One Architectural Decision

The knowledge fragmentation problem and the compute tax problem are separate, but they share a common root: the SaaS model optimizes for vendor revenue, not enterprise outcomes.

Per-tool AI agents maximize the surface area of AI deployment while minimizing the depth of any single integration, because deep cross-system intelligence would require a competitor's data, and that's architecturally incompatible with per-tool SaaS. Per-seat compute pricing maximizes revenue growth as usage scales, because the vendor's margin grows with every query your team runs.

Solving both requires a different starting point: an AI-powered enterprise search platform that deploys inside your environment, indexes across all your systems simultaneously, delivers context-aware and permission-aware answers with full organizational scope, and runs on compute you already control.

Industry adoption of enterprise search correlates directly with regulatory intensity and organizational complexity: the environments where knowledge fragmentation is most costly and where the limitations of per-tool AI agents are most acute.

That's the market Atolio was built for. Not six islands with six agents. One platform that knows how your entire knowledge base connects, deployed on infrastructure you already own.

Stop Paying Twice. Start Getting Answers.

If your enterprise is carrying cloud credits, VPC infrastructure, and a growing roster of per-tool AI agents that still can't answer cross-system questions, Atolio's self-hosted enterprise search platform is built for exactly this situation.

Software license. Your cloud. Your data. One answer.

See the platform in action, or book time with our team to learn more.